The Physiological Signal

Every time your heart beats, it pushes a wave of blood through your arteries and into the capillaries beneath your skin. That wave of blood changes how much light your skin absorbs versus reflects. The change is invisible to the naked eye, but it is measurable by a camera.

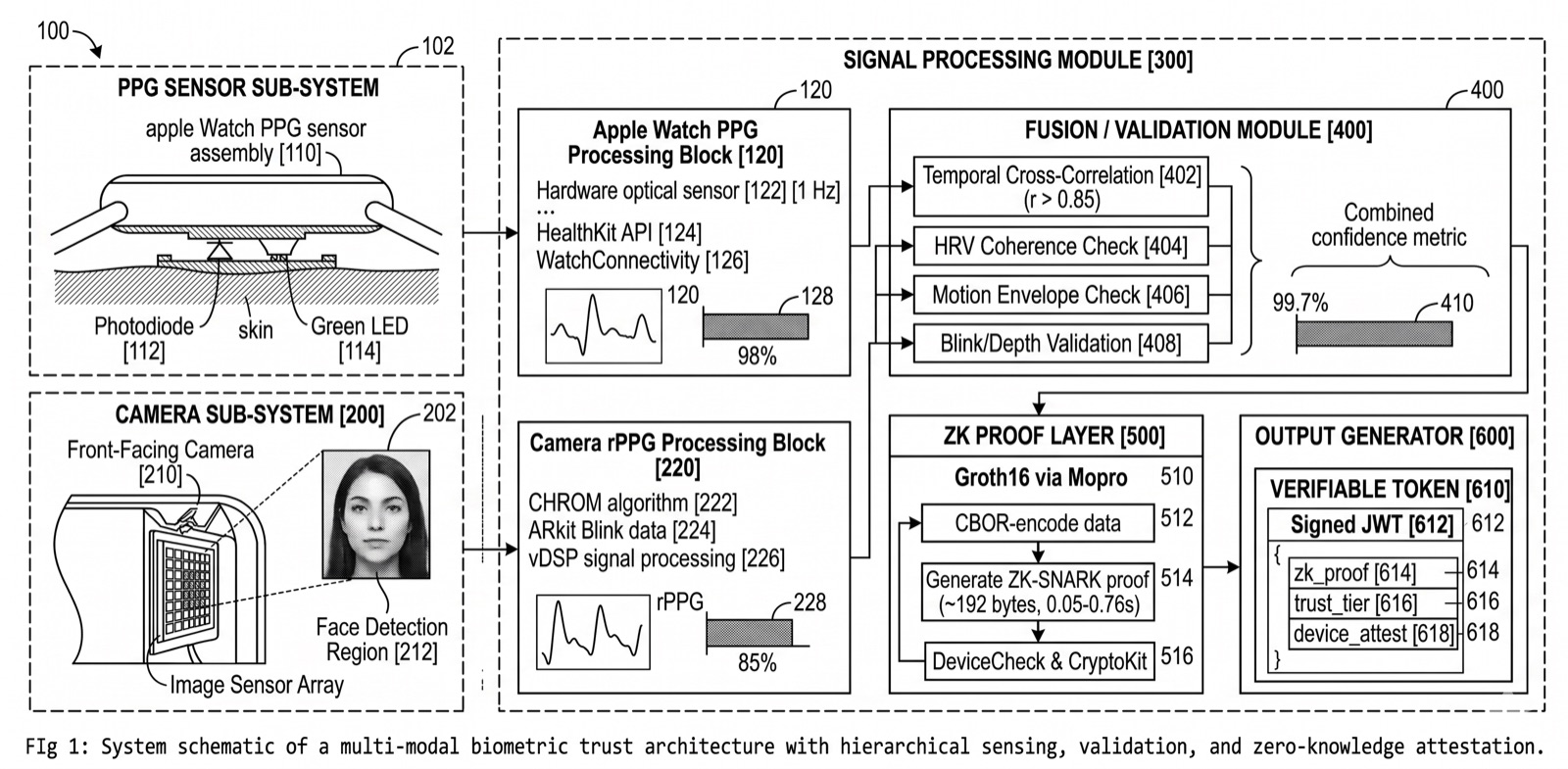

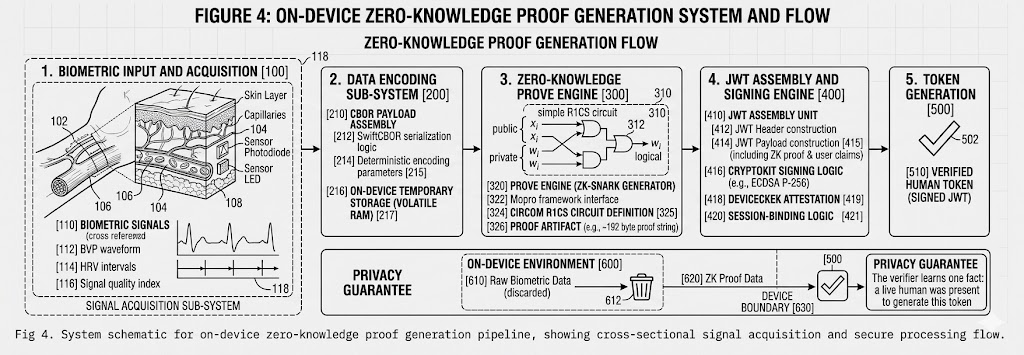

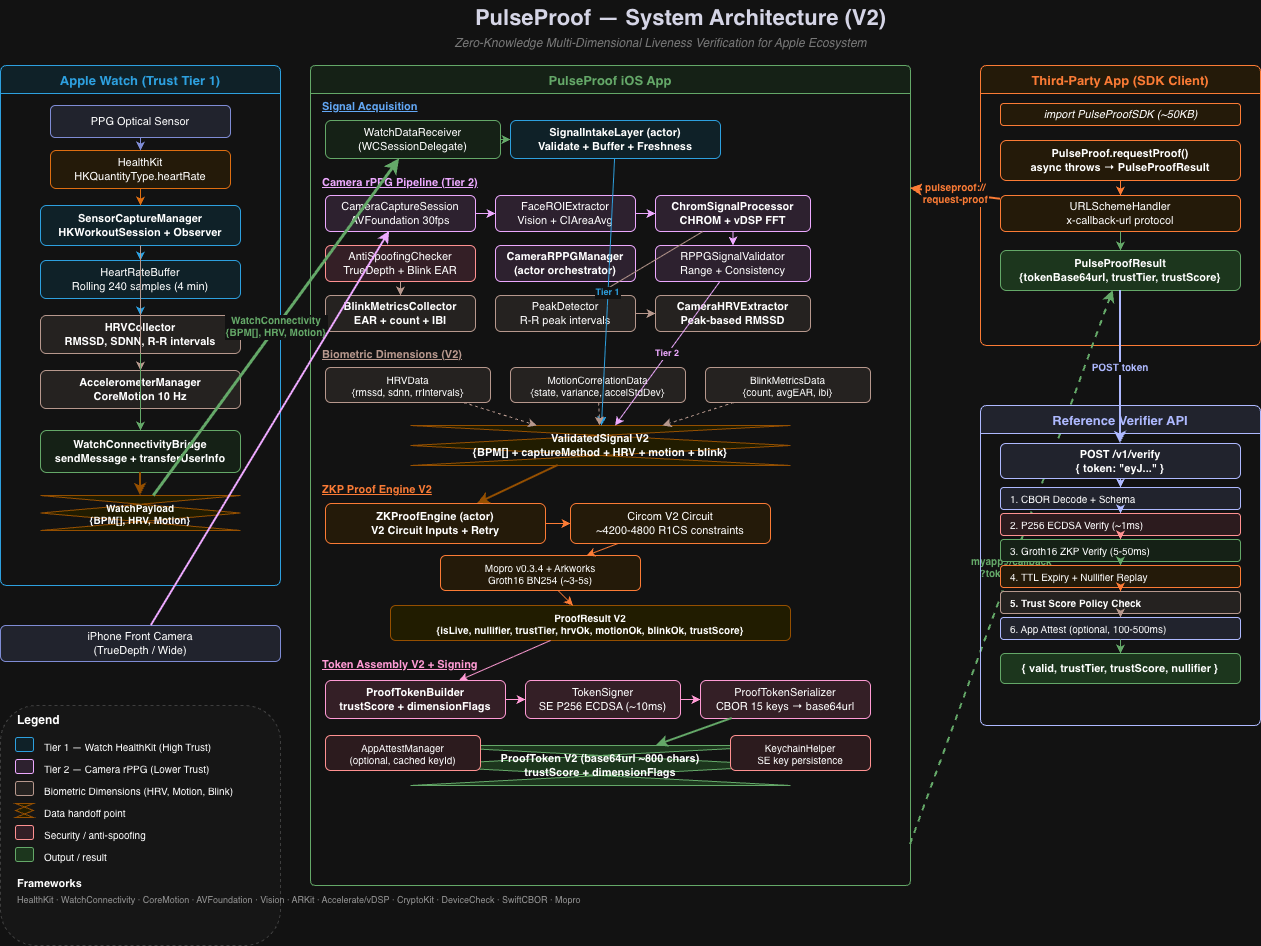

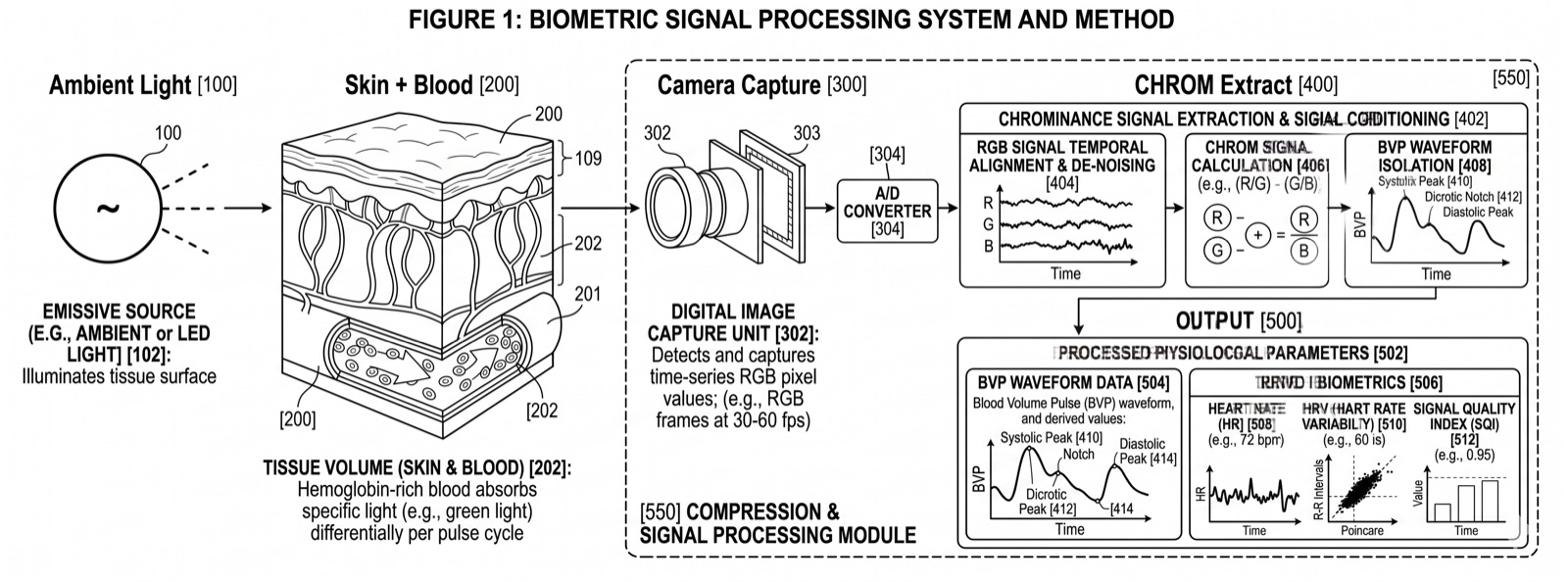

Remote photoplethysmography (rPPG) captures these subtle color fluctuations from ordinary RGB video. By analyzing the red, green, and blue channels of each video frame over time, signal processing algorithms extract a waveform that follows your cardiac cycle. This is the blood volume pulse (BVP), and it carries beat timing information comparable to what a contact pulse oximeter measures optically and an ECG lead measures electrically.
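As a minimal sketch of the first step, the snippet below averages each color channel over a skin region, frame by frame, to produce three time series. The function name `rgb_traces`, the fixed `roi` box, and the synthetic frames are illustrative assumptions. Real pipelines typically locate the face with a detector and track it over time.

```python
import numpy as np

def rgb_traces(frames, roi):
    """Average each color channel over a region of interest, frame by frame.

    frames: array of shape (T, H, W, 3), assumed to be in RGB order.
    roi: (top, bottom, left, right) pixel bounds of a skin patch (hypothetical).
    Returns an array of shape (T, 3): mean R, G, B per frame.
    """
    top, bottom, left, right = roi
    patch = frames[:, top:bottom, left:right, :].astype(np.float64)
    return patch.mean(axis=(1, 2))

# Synthetic stand-in for video: 10 s at 30 fps, flat gray frames with a
# faint 1.2 Hz (72 bpm) ripple added to the green channel only.
fps, seconds = 30, 10
t = np.arange(fps * seconds) / fps
frames = np.full((t.size, 32, 32, 3), 128.0)
frames[..., 1] += 0.5 * np.sin(2 * np.pi * 1.2 * t)[:, None, None]

traces = rgb_traces(frames, roi=(8, 24, 8, 24))
print(traces.shape)  # one row per frame, one column per channel
```

Spatial averaging is what makes the tiny per-pixel change measurable: sensor noise largely averages out over hundreds of pixels, while the pulse-driven change is shared by all of them.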

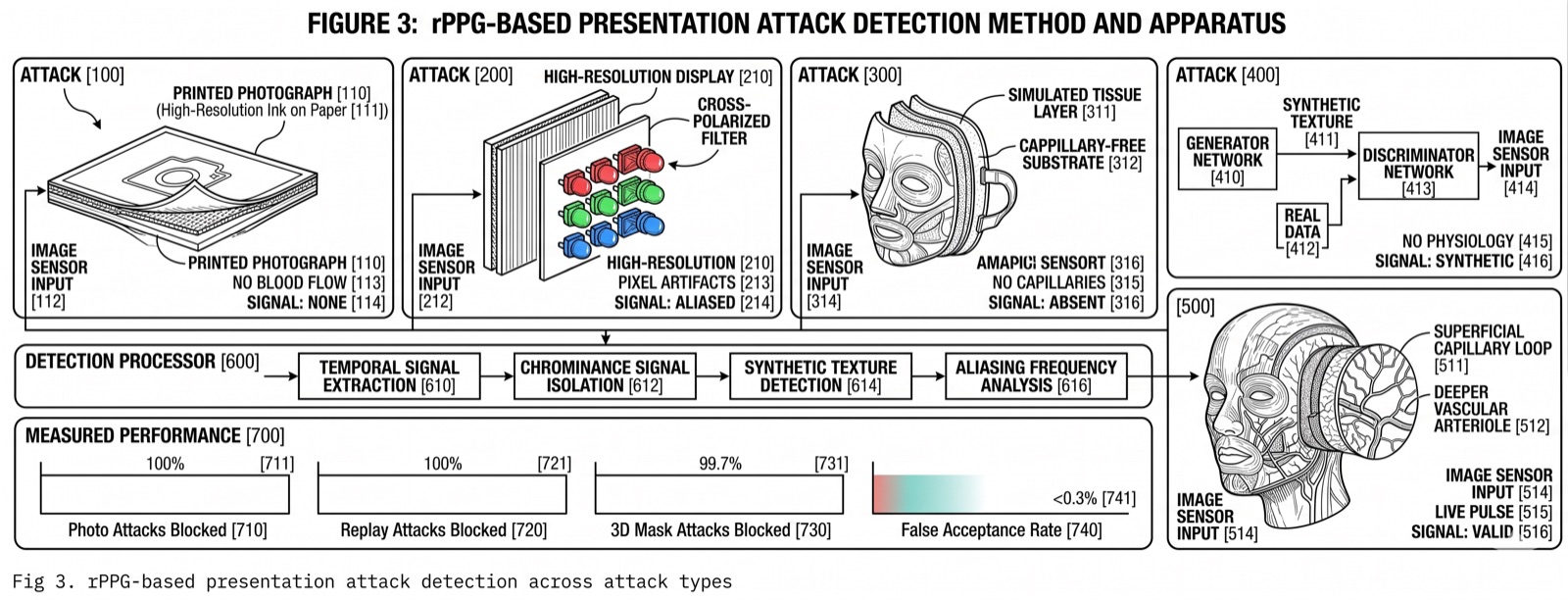

Key insight: The cardiac signal is a physiological fact. No AI model generates a heartbeat. No photograph produces a blood volume pulse. No screen replay creates the time-varying hemodynamic changes that rPPG measures. This is why PulseProof uses it as the foundation for proof of humanity.

How It Works

When light hits your face, some of it is absorbed by hemoglobin in your blood and some is reflected back. During systole (when the heart contracts), more blood fills the capillaries, increasing absorption and decreasing reflected light. During diastole (when the heart relaxes), blood volume drops and more light is reflected. This creates a periodic oscillation in the green channel that directly tracks your pulse rate.
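The periodic oscillation described above can be turned into a pulse rate by finding the dominant frequency of the green trace. The sketch below is one simple way to do that, assuming a band of 0.7 to 4.0 Hz (42 to 240 bpm) as the plausible heart-rate range. The function name `estimate_bpm`, the band limits, and the synthetic signal are assumptions for illustration, not a description of any particular product's algorithm.

```python
import numpy as np

def estimate_bpm(green, fps, low_hz=0.7, high_hz=4.0):
    """Estimate pulse rate as the strongest spectral peak in the heart-rate band."""
    green = np.asarray(green, dtype=np.float64)
    detrended = green - green.mean()               # remove the constant skin tone level
    windowed = detrended * np.hanning(green.size)  # taper to reduce spectral leakage
    power = np.abs(np.fft.rfft(windowed)) ** 2
    freqs = np.fft.rfftfreq(green.size, d=1.0 / fps)
    band = (freqs >= low_hz) & (freqs <= high_hz)
    peak_hz = freqs[band][np.argmax(power[band])]
    return 60.0 * peak_hz

# Synthetic green trace: 20 s at 30 fps, 1.25 Hz pulse (75 bpm) plus sensor noise.
fps = 30
t = np.arange(20 * fps) / fps
rng = np.random.default_rng(0)
green = 100.0 + 0.3 * np.sin(2 * np.pi * 1.25 * t) + 0.05 * rng.standard_normal(t.size)

bpm = estimate_bpm(green, fps)
print(round(bpm, 1))  # should land close to 75 for this synthetic input
```

The frequency resolution of this estimate is the inverse of the window length, so a 20 second window resolves steps of 0.05 Hz, or 3 bpm.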

The signal is strongest at green wavelengths (around 520-580 nm) because hemoglobin absorbs strongly in that band. The red and blue channels carry complementary information that helps separate the cardiac signal from noise sources such as ambient light changes, subject motion, and camera sensor noise.
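To illustrate how the other channels help, here is a simplified, single-window sketch of the chrominance (CHROM) combination from De Haan and Jeanne (2013). It differs from the paper in two ways: band-limiting is done with a plain FFT mask rather than the paper's filter, and the windowed overlap-add step is omitted. The per-channel pulse gains and the flicker amplitude in the demo are illustrative values, not measurements.

```python
import numpy as np

def bandlimit(x, fps, low_hz=0.7, high_hz=4.0):
    """Zero every FFT bin outside the assumed heart-rate band."""
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fps)
    spectrum[(freqs < low_hz) | (freqs > high_hz)] = 0.0
    return np.fft.irfft(spectrum, n=x.size)

def chrom_pulse(rgb, fps):
    """Combine normalized R, G, B traces (shape (T, 3)) into one pulse signal."""
    rgb = np.asarray(rgb, dtype=np.float64)
    norm = rgb / rgb.mean(axis=0)                # divide out the average skin color
    r, g, b = norm[:, 0], norm[:, 1], norm[:, 2]
    x = bandlimit(3.0 * r - 2.0 * g, fps)        # first chrominance signal
    y = bandlimit(1.5 * r + g - 1.5 * b, fps)    # second chrominance signal
    alpha = x.std() / y.std()
    return x - alpha * y                         # shared intensity changes largely cancel

def peak_hz(signal, fps):
    """Frequency of the largest spectral component, ignoring the mean."""
    signal = np.asarray(signal, dtype=np.float64)
    spectrum = np.abs(np.fft.rfft(signal - signal.mean()))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fps)
    return freqs[np.argmax(spectrum)]

# Demo: a 1.2 Hz pulse that affects each channel differently, buried under a
# stronger 2.0 Hz brightness flicker that affects all channels equally.
fps = 30
t = np.arange(20 * fps) / fps
pulse = 0.002 * np.sin(2 * np.pi * 1.2 * t)
flicker = 0.01 * np.sin(2 * np.pi * 2.0 * t)
gains = np.array([0.33, 0.77, 0.53])             # illustrative R, G, B pulse strengths
rgb = 100.0 * (1.0 + pulse[:, None] * gains + flicker[:, None])

print(peak_hz(rgb[:, 1], fps))                   # green alone locks onto the flicker
print(peak_hz(chrom_pulse(rgb, fps), fps))       # CHROM recovers the pulse frequency
```

The design idea is that a brightness change scales all three channels together, while the pulse changes them in different proportions. Both chrominance signals weight a shared change equally, so subtracting one from the other suppresses it while keeping the pulse.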

Published Evidence

A 2025 PRISMA-compliant systematic review by Debnath and Kim in BioMedical Engineering OnLine analyzed 145 peer-reviewed articles spanning 2008 to 2025. The review covered signal processing foundations (ICA, PCA, CHROM, GREEN channel methods), supervised machine learning approaches, and end-to-end deep learning architectures including CNNs, 3D CNNs, LSTMs, Transformers, and GANs.

The key finding reported by the review: end-to-end deep learning models substantially outperform traditional signal processing across all major benchmark datasets. The improvement holds under varying lighting conditions, subject motion, and skin tone.

| Model | Architecture | Dataset | MAE (bpm) | RMSE (bpm) |
|---|---|---|---|---|
| Dual-GAN | Generative Adversarial Network | UBFC-rPPG | 0.44 | 0.67 |
| PhysFormer++ | Temporal Transformer | MAHNOB-HCI | 3.25 | 3.97 |
| Meta-rPPG | CNN-LSTM | PURE | 3.01 | 3.68 |
| EfficientPhys | Lightweight CNN (3.6 MB) | UBFC-rPPG | 1.15 | 1.80 |
| TS-CAN | Temporal Shift CNN | UBFC-rPPG | - | 2.27 |
| RTrPPG | Ultra-light 3D CNN | UBFC-rPPG | - | ~2.5 |
| CHROM | Conventional (chrominance) | Philips (117 subj.) | ~3-5 | ~4-7 |

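The benchmark datasets used in this literature (UBFC-rPPG, PURE, MAHNOB-HCI, VIPL-HR, COHFACE, MMPD) span controlled lab settings and unconstrained real-world conditions. Because the rows above were measured on different datasets, the figures are not strictly comparable across models. The best result in the table, Dual-GAN on UBFC-rPPG, reaches sub-beat-per-minute error (0.44 bpm MAE) from a consumer camera, with no physical contact required.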
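For readers unfamiliar with the two error columns in the table, the sketch below computes mean absolute error (MAE) and root mean squared error (RMSE) between estimated and reference heart rates. The four value pairs are made up purely to show the arithmetic and do not come from any dataset.

```python
import numpy as np

def mae_rmse(predicted_bpm, reference_bpm):
    """Return (MAE, RMSE) in bpm for paired heart-rate readings."""
    err = np.asarray(predicted_bpm, dtype=np.float64) - np.asarray(reference_bpm, dtype=np.float64)
    mae = np.mean(np.abs(err))
    rmse = np.sqrt(np.mean(err ** 2))
    return mae, rmse

# Hypothetical readings: camera-based estimates vs. a contact reference sensor.
predicted = [71.0, 76.0, 64.0, 90.0]
reference = [72.0, 75.0, 66.0, 90.0]

mae, rmse = mae_rmse(predicted, reference)
print(f"MAE = {mae:.2f} bpm, RMSE = {rmse:.2f} bpm")  # MAE = 1.00 bpm, RMSE = 1.22 bpm
```

RMSE is never smaller than MAE and grows faster when a few estimates are far off, so a large gap between the two columns points to occasional large errors rather than a uniform offset.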

Read the full systematic review on PubMed Central

References

- Debnath, U. & Kim, S. (2025). A comprehensive review of heart rate measurement using remote photoplethysmography and deep learning. BioMedical Engineering OnLine, Springer Nature. DOI: 10.1186/s12938-025-01405-5

- Verkruysse, W., Svaasand, L. O., & Nelson, J. S. (2008). Remote plethysmographic imaging using ambient light. Optics Express, 16(26), 21434-21445.

- De Haan, G. & Jeanne, V. (2013). Robust pulse rate from chrominance-based rPPG. IEEE Transactions on Biomedical Engineering, 60(10), 2878-2886. DOI: 10.1109/TBME.2013.2266196